The pros and cons of Google's latest AI breakthrough

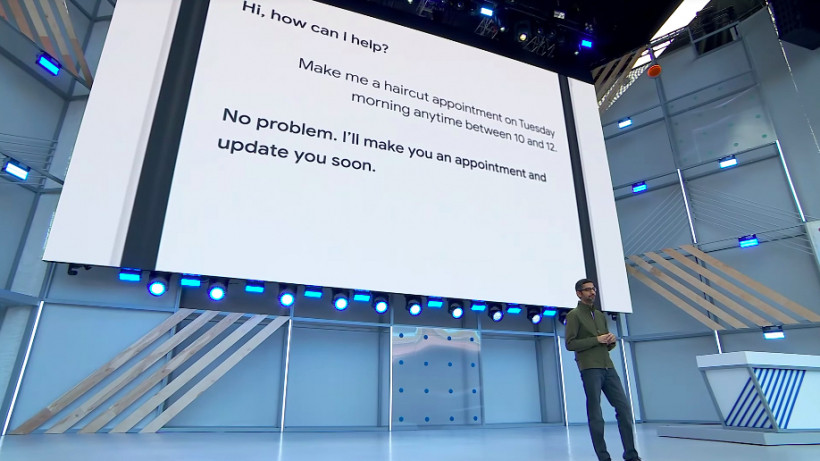

Back at Google’s I/O 2018, CEO Sundar Pichai showed off an advanced application of the company’s AI called Google Duplex, a feature that lets Google Assistant make phone calls on your behalf. No, it’s not going to send someone your pre-recorded message – it’s going to actually have a conversation with someone else for you.

We saw two different demos showing how Assistant could call small businesses to book appointments on behalf of the user – the first for a hair salon appointment and the second to book a table at a restaurant. Google’s Assistant not only managed to follow basic conversational maxims, but even used “umms” and pauses to emulate human speech patterns. It was impressive yet creepy, amazing yet questionable.

Google acknowledged as much at the time: Google Home VP Rishi Chandra told The Ambient last May that the company didn’t know how some of this would work in practice. Since then, the company has slowly figured it out.

What’s actually happening here?

Duplex brings together various AI technologies that Google’s been working on for years, culminating in its most human application yet. The aim of Duplex, says Google, is to automate calls to businesses and book appointments on your behalf, so you don’t have to pick up the phone. In the first demo we saw, Google Assistant called a hair salon to book an appointment, while the receptionist was apparently unaware that she was speaking to an algorithm.

This isn’t something you’ll be using to call your friends and family; it’s for offloading the task of making bookings. Pichai said that 60% of small businesses in the US don’t have an online booking system – and Google sees an opportunity.

Duplex is powered by Google’s WaveNet natural speech generator and has been trained on what Google calls “closed domains”. Essentially, Duplex doesn’t know how to talk to your parents, but it knows how to speak naturally when booking a haircut or ordering a plumber.

Duplex also has a self-monitoring capability, which means that should it get into a more complex exchange that it can’t handle, it can tag in a human operator to do the job. Whether or not the Assistant will be supervised come the time Duplex goes public remains to be seen, but Google’s keeping a human eye on it during its training.

Is this legal? Is it ethical?

Those are the two big questions that Google has had to answer since the debut of Duplex. First, many states in the US require consent before recording a phone call. Second, do people have a right to know that they’re talking to an AI rather than a person?

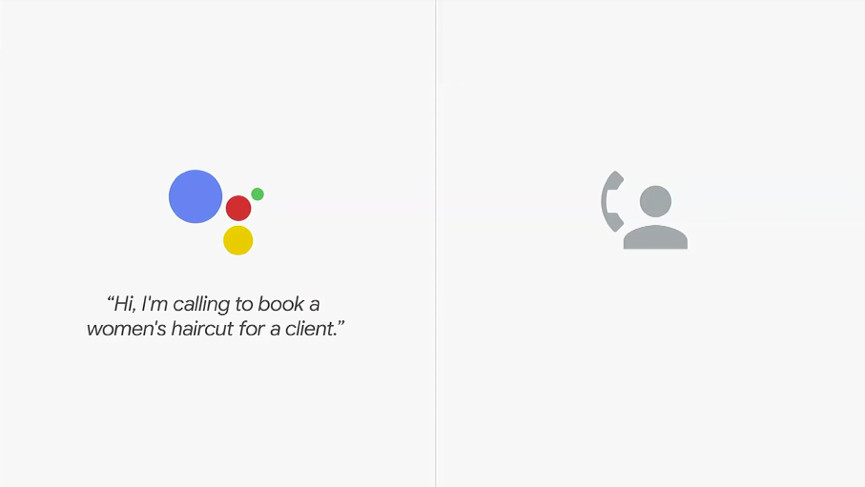

Google has clearly been listening to these concerns. In June 2018, it published a video showing Duplex calling a restaurant to make a reservation. In this demonstration, however, Duplex announces itself first and tells the host that the call will be recorded. It also interjects with some “Uhhs” and “Mmhmms” to sound more human. The video paints a clearer picture of Duplex than the I/O demo did.

But there’s also the obvious question of whether businesses will be happy dealing with a robot. We can’t see any scenario where this technology will be perfect from day one; we can only imagine how frustrating a scrimmage with a confused Assistant could be for someone whose day is busy enough without having to wrestle with AI. Furthermore, even if the person knows they’re talking to a robot, who’s to say they’ll want to, no matter how human-like it sounds?

But there are positives to remember, too. For people who are elderly or disabled, Duplex is an entirely new assistive technology that could be incredibly helpful.

When will Duplex roll out?

After a small initial beta test, Duplex rolled out to a small number of users in New York, Atlanta, Phoenix and the San Francisco Bay Area. Now it’s getting a larger expansion, as Pixel users in 43 states will have access to Google Duplex.

The seven states not included in the rollout of Duplex are Kentucky, Louisiana, Texas, Minnesota, Montana, Indiana and Nebraska. The reason is down to Google having to work through both local and state laws.

The rollout is limited to Pixel users for now, but will soon expand to Android and iOS users. Smart Display and Google Home users will have to wait a little longer until they can have Assistant book their haircuts and such.

However, the rollout is still a bit of a surprise. There’s still quite a lot we don’t know. What if it reaches an automated responder and suddenly finds itself in a robot face-off? What about the potential for abuses of this technology? We imagine answers to these questions, and more, will become clearer once Duplex has completed its initial conversations in the public arena.

However, at least for now, those able to access the service won’t be getting the full experience anyway. Duplex is restricted to restaurant bookings, and will only be able to make English-language calls. Some restaurants will also be unavailable (for reasons that Google hasn’t really clarified), and, as we’ve said, this is just for Pixel phones initially.

“We’re still developing this technology and we actually want to work hard to get this right, get the user experience and the expectation right for both businesses and users,” Pichai said at I/O 2018. “But done correctly, it will save time for people and generate a lot of value for businesses.”

Perhaps unsurprisingly, then, Duplex isn’t standalone to begin with, and is instead folded into Google Assistant. Businesses in the initial states and future ones will also have the choice to opt out of Duplex, as the bot will announce it is a bot at the beginning of the call.

Of course, it’s unclear at this stage how long it’ll take for the service to roll out completely. Google is naturally recording all the Duplex calls in its controlled rollout as it looks to address the service’s shortcomings, and hopefully we’ll be treated to the full experience sooner rather than later.