Got yourself an Amazon Echo and wondering how to listen to free music without paying for a music subscription service? We have you covered.

Amazon

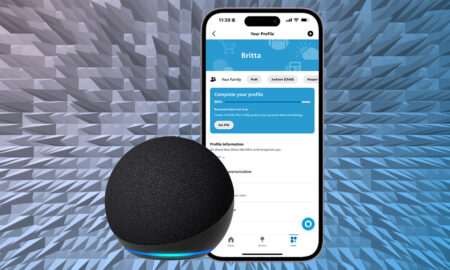

You can set up Household Profiles to use multiple accounts on Alexa, and set up Voice ID for a more personalised experience. Here’s how.

A quick guide to keeping your Alexa smart home organized and well managed. The smart home tech linked to your…

Alexa is so much more than just a weather and trivia guru. Of course, you can ask your Alexa smart…

Amazon’s Echo can be connected to an external Bluetooth speaker and to your phone. Here’s how.

Transform your cleaning routine with Roomba and smart speakers. Connect Roomba to Alexa or Google Assistant for hands-free cleaning.

Wondering how to connect Spotify to Alexa? We have you covered with this step-by-step guide.

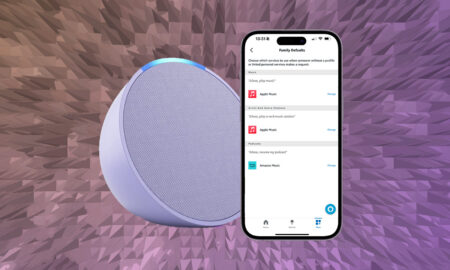

Wondering how to set up and use Apple Music with Alexa on Echo speakers and through Fire TV? We have you covered.

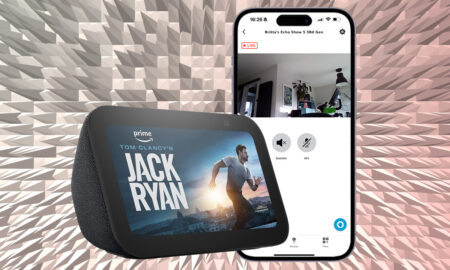

You can remotely view your Echo Show’s camera feed to check in on your home. Here are the steps to follow.

Alexa has several tricks to help you sleep, from sleep sounds to setting a sleep timer. Here’s how to use Alexa for a better night’s sleep.

If you have an Amazon Echo, this is how to turn it into a top alarm clock and set up a morning Alexa Routine.

Here’s what you’ll need to turn Alexa Song ID on or off, and how to do it, so you always know what song is playing.

This step-by-step guide covers how to connect an Amazon Echo device to Wi-Fi, and how to change an existing Wi-Fi network.

Alexa can get real creepy at times, saying weird stuff or cackling for no reason. And the internet loves it…

Wondering how to adjust the time on your Echo device? We have you covered in this step-by-step guide.

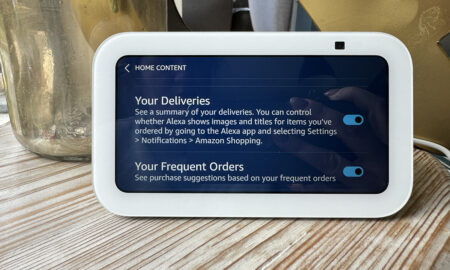

A step-by-step guide on how to make your Echo Show display your upcoming Amazon deliveries – or stop displaying them.

Make Alexa bilingual. Amazon’s Alexa doesn’t just know everything there is to know about the weather, general knowledge and what’s…

Amazon Echo Hub: Introduction. On a smart home focused site such as this, it’s hard to remember that…

Make Alexa use a different music service on Amazon Echo. It will probably come as no surprise to many that…

Amazon has a number of Echo devices so picking the right one for your home is no easy feat. The…